A New York appellate court ruled that social media companies cannot be sued for their alleged role in the 2022 racially motivated mass shooting at a Buffalo grocery store.

The court found the companies are shielded by Section 230 of the Communications Decency Act and the First Amendment, even though the claims focused on product design rather than publication.

The 3-2 decision by the Appellate Division of the New York Supreme Court, Fourth Department, reversed a lower court ruling that had allowed the case to proceed. The plaintiffs, who are survivors and relatives of victims, had filed suit against firms including Alphabet, Meta, Snap, Amazon, Discord, Reddit, and YouTube.

They alleged that these platforms used algorithmic designs that fostered radicalization and enabled the shooter’s violent actions.

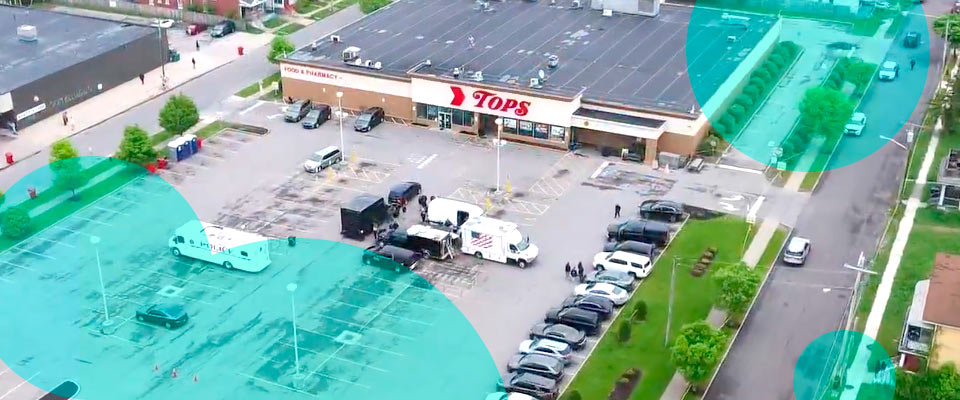

On May 14, 2022, 18-year-old Payton Gendron shot and killed 10 Black individuals and injured three others at the TOPS supermarket in Buffalo.

He later pleaded guilty to 10 counts of intentional murder and received a life sentence without parole. Investigators found that Gendron had been influenced by the “Great Replacement Theory” and had consumed extremist content online in the months leading up to the attack.

Rather than suing over any particular piece of content, plaintiffs targeted the structure and functionality of the platforms. They argued the companies should be held liable for deploying algorithms that, over time, radicalized Gendron by feeding him violent, racist content.

The lawsuit included claims for negligence, strict liability, and unjust enrichment—asserting that the platforms’ engagement-driven design exploited users’ psychological vulnerabilities.

Gendron himself admitted to being addicted to the platforms. Plaintiffs alleged that this addiction isolated him and reinforced extremist beliefs that led to the attack.

Despite these arguments, the appeals court sided with the tech companies. It ruled that algorithmic recommendations remain a form of content publishing, which qualifies for protection under federal law. The court warned that stripping Section 230 immunity from platforms for algorithmic content curation would have far-reaching consequences.

In the majority's view, social media companies that sort and display content would then be subject to liability for every untruthful statement made on their platforms, and the Internet would over time devolve into mere message boards.

The court further ruled that platforms qualify under New York law as providers of “interactive computer services,” reaffirming their legal status as third-party content hosts. The judges found that either Section 230 or the First Amendment must apply—if not both. “Under no circumstances are they protected by neither,” the majority concluded.

The two dissenting judges disagreed. They argued that because plaintiffs were not targeting any specific third-party content, Section 230 and the First Amendment should not apply. The complaint, they said, concerned the architecture and design of the platforms—not what users posted.

Kristen Elmore-Garcia, one of the plaintiffs’ attorneys, told local media that the plaintiffs plan to appeal the ruling to the New York Court of Appeals. “It is frustrating, but it’s not the end of the fight,” she said.

In contrast to the social media ruling, the same appellate court allowed a separate lawsuit to proceed against Georgia-based gun accessory manufacturer MEAN LLC.

Plaintiffs allege that MEAN enabled the shooter to bypass magazine restrictions through its hardware, violating New York law.

MEAN had requested dismissal under the federal Protection of Lawful Commerce in Arms Act (PLCAA), which provides broad immunity for gun makers. Plaintiffs countered that the PLCAA does not apply when manufacturers violate laws or produce accessories not classified as firearms.

The diverging outcomes reflect the legal tension between platform liability, product design, and federal protections across both the tech and firearms industries. Further appeals are expected.

The case is likely to influence how future litigants frame liability arguments involving platform design and algorithmic influence, particularly in the wake of violent events with an online radicalization component.

The forthcoming appeal may test the limits of Section 230 and help define the boundary between content hosting and platform engineering.