DeepInfra raised $107 mn in Series B funding to expand its inference cloud platform and scale compute infrastructure globally as enterprise AI workloads shift toward continuous inference demand.

The round was co-led by 500 Global and Georges Harik, with participation from A.Capital Ventures, Crescent Cove, Felicis, NVIDIA, Peak6, Samsung Next, Supermicro, and Upper90.

The company says token processing volume has increased 25 times since its Series A financing. That growth reflects a larger change happening across enterprise AI infrastructure. Training models still matters, though inference workloads increasingly dominate operational demand once systems move into production.

DeepInfra built its business around that assumption years ago. The company argues inference, rather than model training, will define the next phase of enterprise AI infrastructure economics.

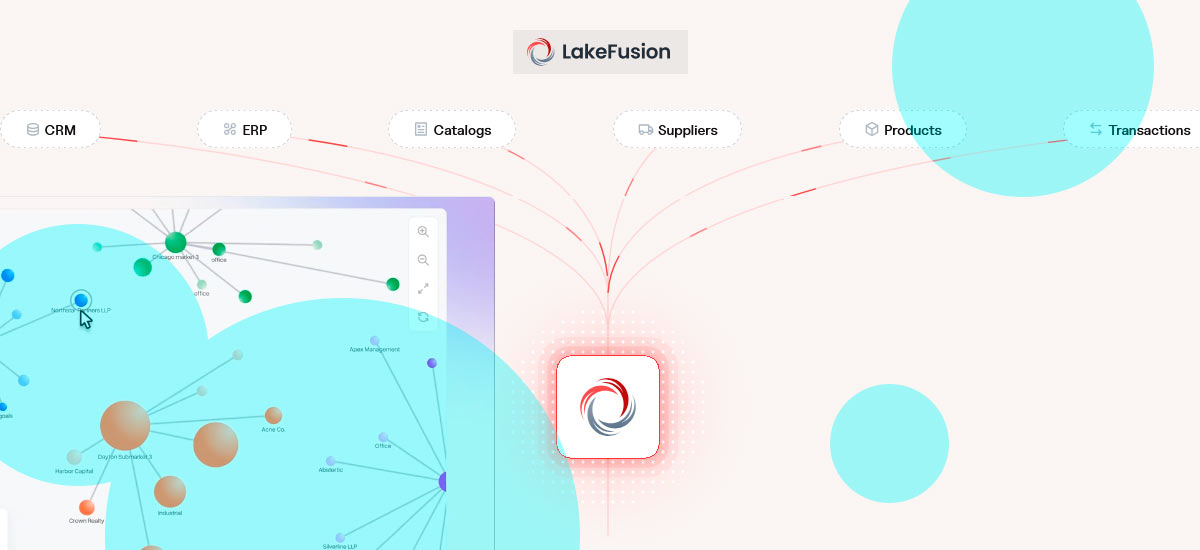

According to DeepInfra, two shifts now collide at once: open-source AI models are reaching performance levels closer to proprietary systems, while agent-based AI workflows generate constant high-volume token traffic.

Agentic systems place different pressure on infrastructure compared with conventional chatbot usage. A single AI agent task can trigger anywhere from 50 to more than 100 model calls, and agents often run continuously across workflows with no idle gaps.
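To make the scale of that difference concrete, here is a rough back-of-the-envelope sketch. Only the range of 50 to 100 model calls per agent task comes from the company's figures. The task rate and the tokens-per-call value are illustrative assumptions, and the `AgentWorkload` class and `amplification` helper are hypothetical names invented for this example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentWorkload:
    """Hypothetical description of a workload, for illustration only."""
    tasks_per_hour: int    # assumption: how many user tasks arrive per hour
    calls_per_task: int    # model calls triggered by one task
    tokens_per_call: int   # assumption: average tokens processed per call

    def tokens_per_hour(self) -> int:
        # Total token traffic is the product of the three factors.
        return self.tasks_per_hour * self.calls_per_task * self.tokens_per_call


def amplification(agent: AgentWorkload, baseline: AgentWorkload) -> float:
    """How many times more tokens the agent workload generates than the baseline."""
    return agent.tokens_per_hour() / baseline.tokens_per_hour()


# Baseline: a chatbot that makes one model call per user request (assumed).
chatbot = AgentWorkload(tasks_per_hour=1_000, calls_per_task=1, tokens_per_call=1_500)

# Agentic workloads at the low and high ends of the 50-100 call range.
agent_low = AgentWorkload(tasks_per_hour=1_000, calls_per_task=50, tokens_per_call=1_500)
agent_high = AgentWorkload(tasks_per_hour=1_000, calls_per_task=100, tokens_per_call=1_500)

if __name__ == "__main__":
    for name, workload in [("chatbot", chatbot), ("agent (50 calls)", agent_low),
                           ("agent (100 calls)", agent_high)]:
        print(f"{name}: {workload.tokens_per_hour():,} tokens/hour")
    print(f"amplification, low end: {amplification(agent_low, chatbot):.0f}x")
    print(f"amplification, high end: {amplification(agent_high, chatbot):.0f}x")
```

Under these assumptions, the same number of user tasks produces 50 to 100 times the token traffic of a one-call-per-request chatbot. Real ratios depend on how many tokens each call in an agent loop actually carries.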

Traditional cloud platforms were designed around mixed workloads with uneven traffic patterns. AI inference workloads look different. They run constantly, generate heavy token throughput, and require predictable low-latency performance across distributed systems.

According to Beinsure analysts, inference infrastructure has become one of the fastest-growing AI infrastructure segments because enterprises increasingly care less about model training prestige and more about operational economics after deployment.

Token generation costs now affect production margins directly.

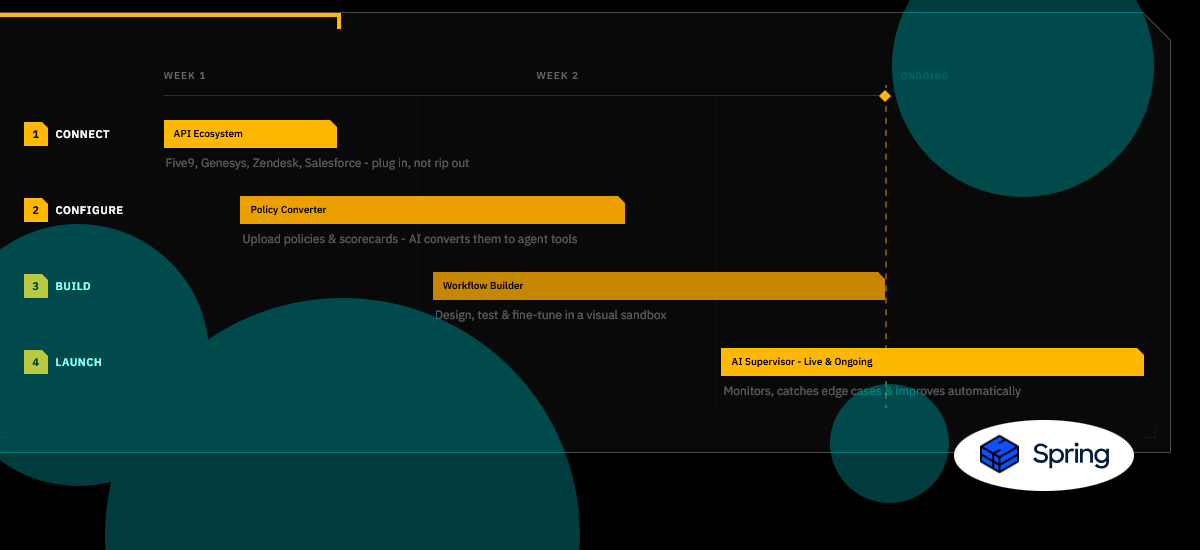

DeepInfra says serving inference efficiently requires control across hardware, networking, and software layers simultaneously.

The company built its stack around vertically integrated infrastructure optimized specifically for inference-heavy environments rather than general-purpose cloud computing.

The platform currently operates GPU infrastructure across eight US data centers, with additional international locations under development.

Unlike hyperscalers relying partly on rented or spot capacity, DeepInfra owns and operates its infrastructure directly, from the GPU hardware up through the API delivery layer. The company argues this structure improves latency consistency and infrastructure efficiency under continuous inference demand.

Its engineering background also shapes the architecture. Members of the DeepInfra team previously built imo, the messaging application used by more than 200 mn users globally.

That experience managing large-scale distributed systems feeds directly into how the company handles inference traffic.

DeepInfra also positions itself closely within NVIDIA’s broader AI ecosystem. The company supports NVIDIA Nemotron models, the NemoClaw agent framework, and NVIDIA Dynamo inference software.

Early deployments of Blackwell GPUs, along with future Vera Rubin systems paired with Dynamo, are expected to improve inference cost efficiency by as much as 20 times, according to the company.

The platform currently supports more than 150 open-source models through OpenAI-compatible APIs. DeepInfra says enterprise security remains a major focus as AI systems move deeper into production environments.
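For readers unfamiliar with the term, "OpenAI-compatible" generally means a provider accepts requests in the same shape as OpenAI's Chat Completions API, so existing client code can be pointed at a different base URL. The sketch below builds such a request with Python's standard library without sending it. The base URL and model name are hypothetical placeholders, not DeepInfra's actual values, and `build_chat_request` is a helper invented for this example.

```python
import json
import urllib.request

# Hypothetical placeholders: substitute the provider's documented values.
BASE_URL = "https://inference.example.com/v1"
MODEL = "example-org/example-open-model"


def build_chat_request(prompt: str, api_key: str,
                       base_url: str = BASE_URL,
                       model: str = MODEL) -> urllib.request.Request:
    """Build (but do not send) a Chat Completions-style POST request."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=f"{base_url}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {api_key}",
        },
        method="POST",
    )


req = build_chat_request("Summarize this support ticket.", api_key="YOUR_API_KEY")

if __name__ == "__main__":
    # Inspect the request instead of sending it; no network access is needed.
    print(req.get_method(), req.full_url)
    print(json.loads(req.data))
```

Because the request shape stays the same across compatible providers, switching vendors in this sketch amounts to changing `BASE_URL`, `MODEL`, and the API key. Actual endpoint paths and supported parameters should be checked against the provider's documentation.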

The company operates under a zero-data-retention policy and maintains SOC 2 and ISO 27001 certifications.

The new capital will support three areas: expanding global compute capacity, improving developer tooling, and supporting next-generation open-source and agentic AI models as they enter production deployment.

AI infrastructure spending has increasingly shifted toward inference optimization over the past year. Training frontier models still captures headlines, though the operational side of enterprise AI now revolves around serving massive volumes of inference requests efficiently and cheaply enough to sustain real business workloads.

DeepInfra is betting this transition reshapes the cloud market itself. General-purpose infrastructure providers built around traditional compute demand may struggle as always-on inference traffic becomes the default workload pattern for enterprise AI systems.